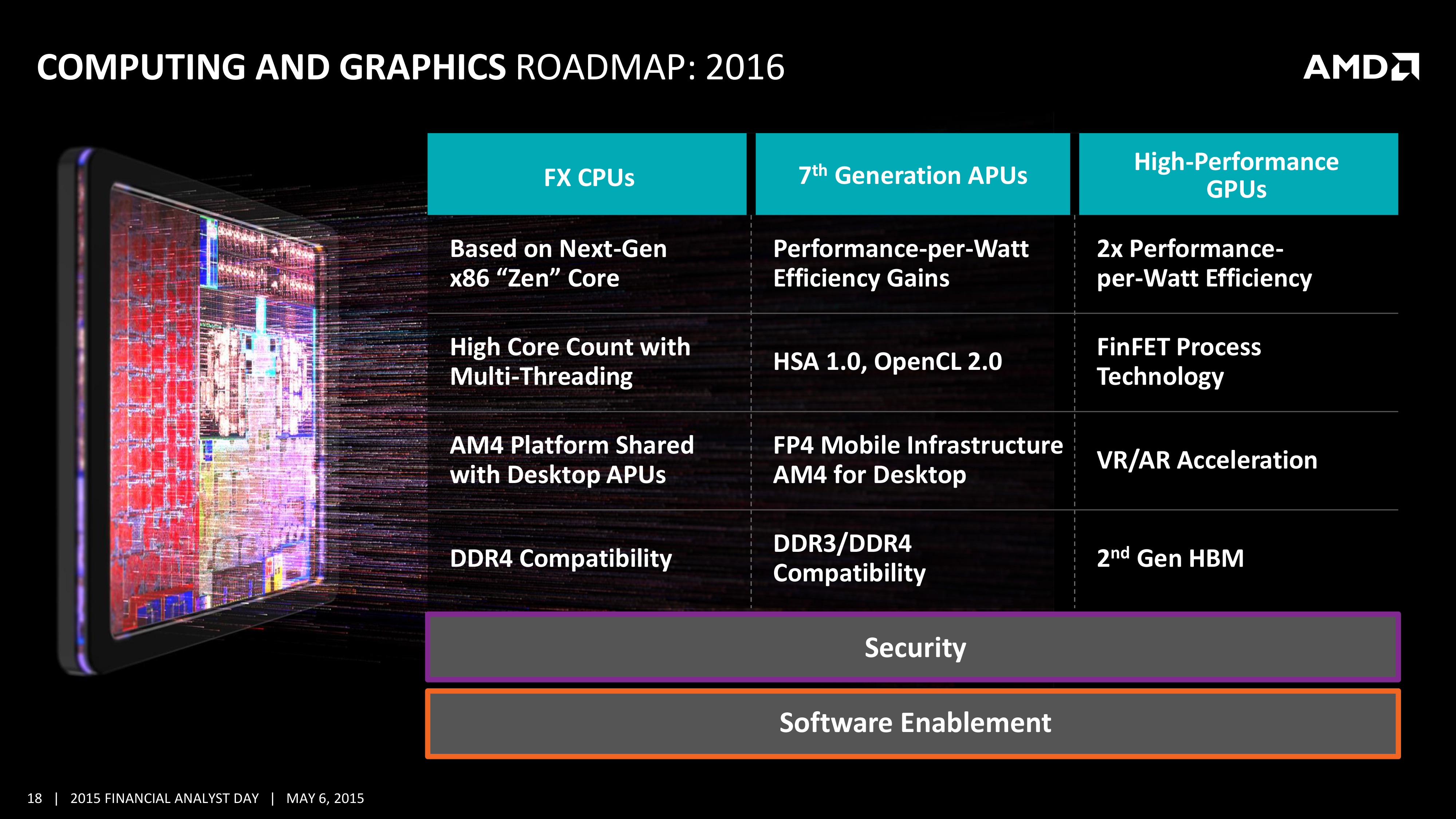

I read the tea leaves and said this about three years back. About two years ago I said, "You're going to see an APU with a chiplet for the CPU, a chiplet for the GPU, an I/O die, and an HBM package that is part of unified memory dedicated to graphics calls." AMD was a big proponent of heterogeneous architectures in which different processors work together. I looked at HSA years ago and, by studying AMD's scalable architecture and the new memory types, made a very good guess about where it was going.

AMD has blazed a path with this kind of unified CPU-GPU memory and secured big wins for exascale-class systems, including the recent El Capitan supercomputer, which will hit two exaflops and wields the new Infinity Fabric 3.0. The core tenets of HSA's open architecture clearly live on in AMD's new proprietary implementation, which likely leans heavily on AMD's open ROCm software ecosystem, now enjoying the fruits of U.S. Department of Energy (DOE) sponsorship. Intel is also working on its Ponte Vecchio architecture, which will power the Aurora supercomputer at the DOE's Argonne National Laboratory. Intel's approach leans heavily on its oneAPI programming model and also aims to tie together shared pools of memory between the CPU and GPU (lovingly named Rambo Cache). It will be interesting to learn more about the differences between the two approaches as more information trickles out.

Is this type of architecture, along with the underlying unified programming models, required to hit exascale-class performance within acceptable power envelopes? That's an open question. Nvidia is part of the CXL consortium, whose interconnect should offer coherency features, but while both AMD and Intel have won exceedingly important contracts for the DOE's exascale-class supercomputers (the broader server ecosystem often adopts the winning HPC techniques), Nvidia hasn't announced any such wins, despite its dominant position in GPU-accelerated compute for HPC and the data center. Meanwhile, Nvidia might suffer in the supercomputer realm because it doesn't produce both CPUs and GPUs and therefore cannot enable similar functionality.

AMD famously embraced the Heterogeneous System Architecture (HSA) to tie together Carrizo's fixed-function blocks, touting that feature in its marketing materials. While AMD still appears to be a member of the HSA Foundation, it no longer actively promotes HSA in communications with the press.

Much like the approach of extending an Infinity Fabric link between the CPU and GPU, HSA provides a pool of cache-coherent shared virtual memory that eliminates data transfers between components to reduce latency and boost performance.

For instance, when a CPU completes a data processing task, the data may still require processing on the GPU. Without shared memory, the CPU must pass the data from its memory space to the GPU's memory, after which the GPU processes the data and returns it to the CPU. This complex process adds latency and incurs a performance penalty, but shared memory allows the GPU to access the same memory the CPU was using, which reduces and simplifies the software stack.

Data transfers often consume more power than the computation itself, so eliminating those transfers boosts both performance and efficiency. Extending those benefits to the system level by sharing memory between discrete GPUs and CPUs gives AMD a tangible advantage over its competitors in the HPC space.

AMD also provided some examples of the code required to use a GPU without unified memory, showing that a unified memory architecture alleviates much of that coding burden. Leveraging cache coherency, as the company does with its Ryzen APUs, enables the best of both worlds and, according to the slides, unifies the data and provides a "simple on-ramp to CPU+GPU for all codes."
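The copy-in/copy-out flow described above, and what shared memory removes from it, can be sketched with a toy model. This is a minimal illustration under stated assumptions, not AMD's example code: it makes no GPU, ROCm, or HIP calls, `gpu_scale` is a hypothetical stand-in for a kernel, and byte counts assume 4-byte float32 elements. The discrete path stages data into a separate "device" buffer and copies the result back, while the unified path lets the "GPU" work on the CPU's buffer directly.

```python
from dataclasses import dataclass

# Toy model only: plain Python lists stand in for memory spaces.
# No GPU, ROCm, or HIP APIs are used; all names here are hypothetical.


@dataclass
class TransferLog:
    copies: int = 0
    bytes_moved: int = 0

    def record(self, n_elements: int, elem_size: int = 4) -> None:
        # Assumes 4-byte (float32) elements when counting bytes moved.
        self.copies += 1
        self.bytes_moved += n_elements * elem_size


def gpu_scale(buf: list[float], factor: float) -> None:
    """Hypothetical stand-in for a GPU kernel: scales the buffer in place."""
    for i in range(len(buf)):
        buf[i] *= factor


def run_discrete(host: list[float], factor: float, log: TransferLog) -> list[float]:
    """Separate memory spaces: stage data into 'device' memory, then copy it back."""
    device = list(host)        # host -> device copy
    log.record(len(host))
    gpu_scale(device, factor)  # the "GPU" works on its own copy
    result = list(device)      # device -> host copy
    log.record(len(device))
    return result


def run_unified(shared: list[float], factor: float, log: TransferLog) -> list[float]:
    """Shared memory: the 'GPU' operates on the CPU's buffer, so no copies are logged."""
    gpu_scale(shared, factor)
    return shared


data = [1.0, 2.0, 3.0, 4.0]
shared = [1.0, 2.0, 3.0, 4.0]
discrete_log, unified_log = TransferLog(), TransferLog()
out_discrete = run_discrete(data, 2.0, discrete_log)
out_unified = run_unified(shared, 2.0, unified_log)
print(out_discrete, discrete_log)
print(out_unified, unified_log)
```

Both paths produce the same results, but the discrete path logs two copies (32 bytes for four elements) while the unified path logs none. On real hardware those staging copies cost latency and power, which is the overhead that coherent shared memory is meant to eliminate.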
